💡 Contributed by Bytedance

This case study was contributed by Bytedance Volcano Engine LAS (Lake for AI Service) Team

As autonomous driving technology proliferates, unstructured data like camera-captured images, LiDAR-generated point clouds, and microphone-collected audio surges. This massive, diverse, and real-time data demands underlying storage and processing technologies with high efficiency and speed.

Volcano Engine’s multimodal data lake solution is an intelligent data infrastructure for the AI era, comprehensively covering lake computing, storage, management, and analytics. The LAS (Lake for AI Service) enables unified, fine-grained management of unstructured data assets (text, images, audio/video) while providing end-to-end intelligent data services for model pre-training, post-training, and AI application development.

Recently, Volcano Engine LAS has been applied and implemented in autonomous driving scenarios. This article focuses on the "auto driving" scenario, detailing how LAS’s core storage format—Lance, the open lakehouse format for multimodal AI—rapidly constructs a next-gen AI data lake to efficiently store, manage, and process multimodal data (text, images, audio/video).

Background

Client A is a leading Chinese automotive enterprise specializing in Intelligent Connected Vehicle (ICV) scenarios. This solution addresses their challenges in managing and processing massive multimodal data (text/images/point clouds) by leveraging the Lance-based AI data lake. Breakthroughs are achieved through three core technologies:

- Zero-Cost Data Evolution: Adding new data columns for dynamic labeling without rewriting historical datasets, reducing storage costs by 30%.

- Transparent Compression: ZSTD encoding achieves 70% compression for point cloud data, minimizing network bandwidth pressure.

- Point Query Optimization: Column projection and lightweight shuffle boost training efficiency, reaching 96% GPU utilization.

Deployed at an automotive client, the solution improved EB-scale data processing efficiency by 3× and accelerated model training delivery by 40%. Below are technical details.

Challenges

Client A faced:

- Data Explosion: Real-time multimodal data collection (cameras, LiDAR) generates TBs/day per test vehicle, scaling to EB-level with mass production. Unstructured data (e.g., driving videos) require conversion to structured insights (object detection, path planning).

- Core Issues:

- Storage: Reduce costs without compromising point/range query performance.

- Compute: Efficiently scale from single-node experiments to production engineering.

- Retrieval: Quickly extract business value from massive unstructured data.

- Management: Track data pipelines for continuous optimization.

Solution Architecture: Lance-Driven Upgrade

Advantage 1: Data Mining & Management

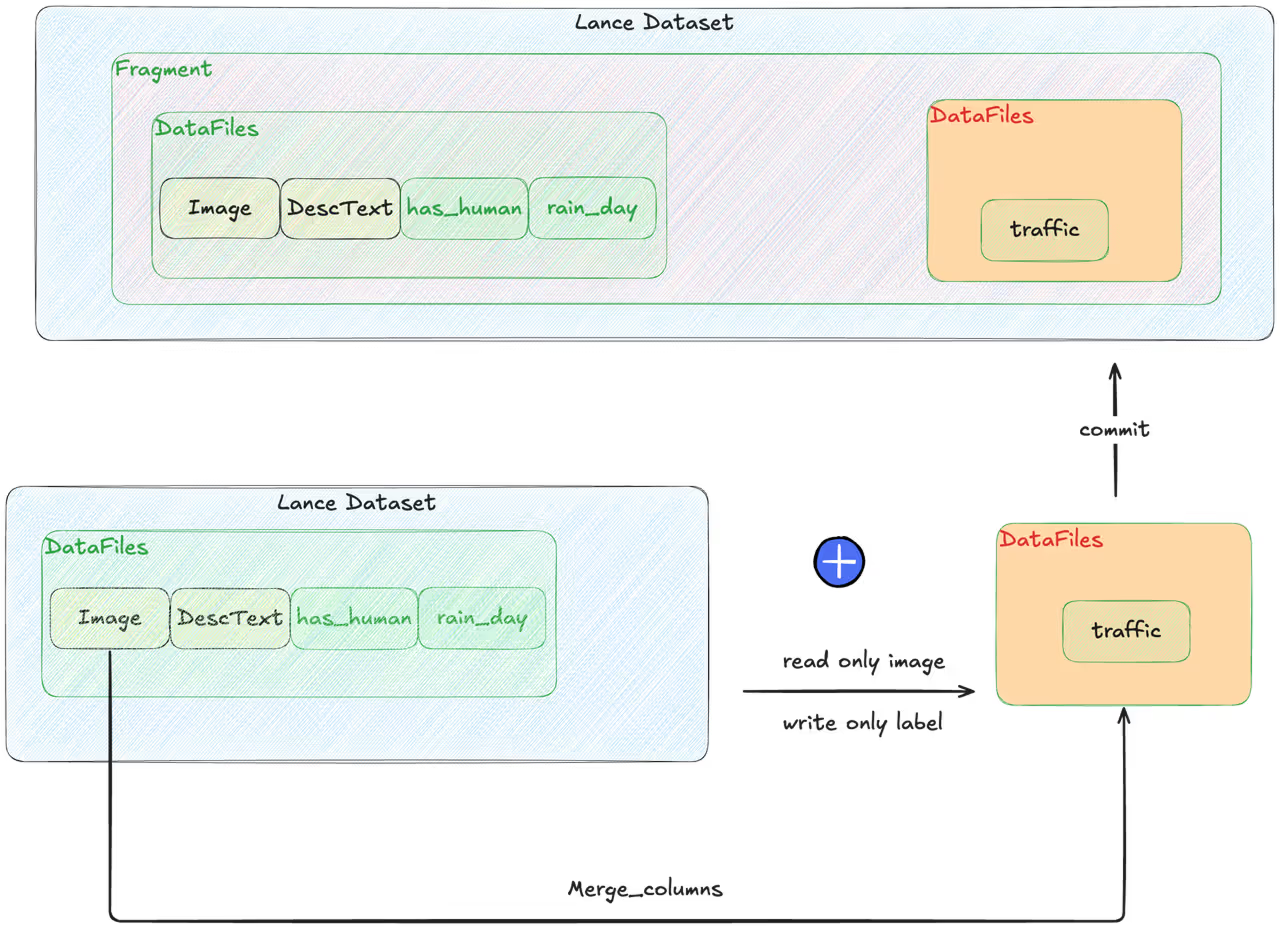

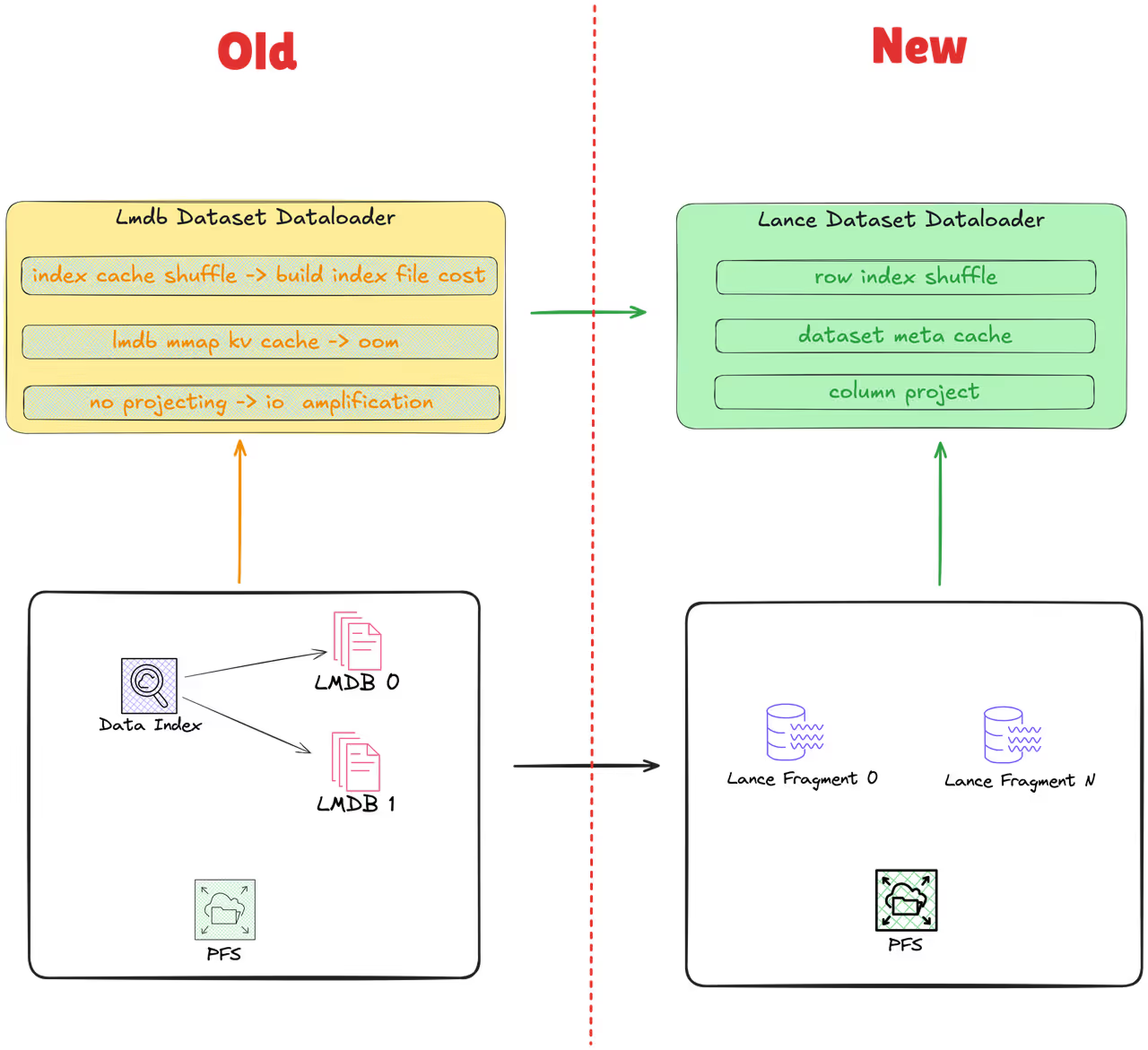

- Pain Point: LMDB required full dataset rewrite for new label columns, causing storage bloat and GPU waste.

- Lance Solution: Unified metadata management supports incremental updates.

Advantage 2: Model Training Optimization

- Pain Point: Traditional methods suffered I/O amplification and memory bloat, limiting GPU utilization to 60%.

- Lance Solution: Point queries enable lightweight data shuffle and column projection, reading only essential fields.

Lance Core Advantages

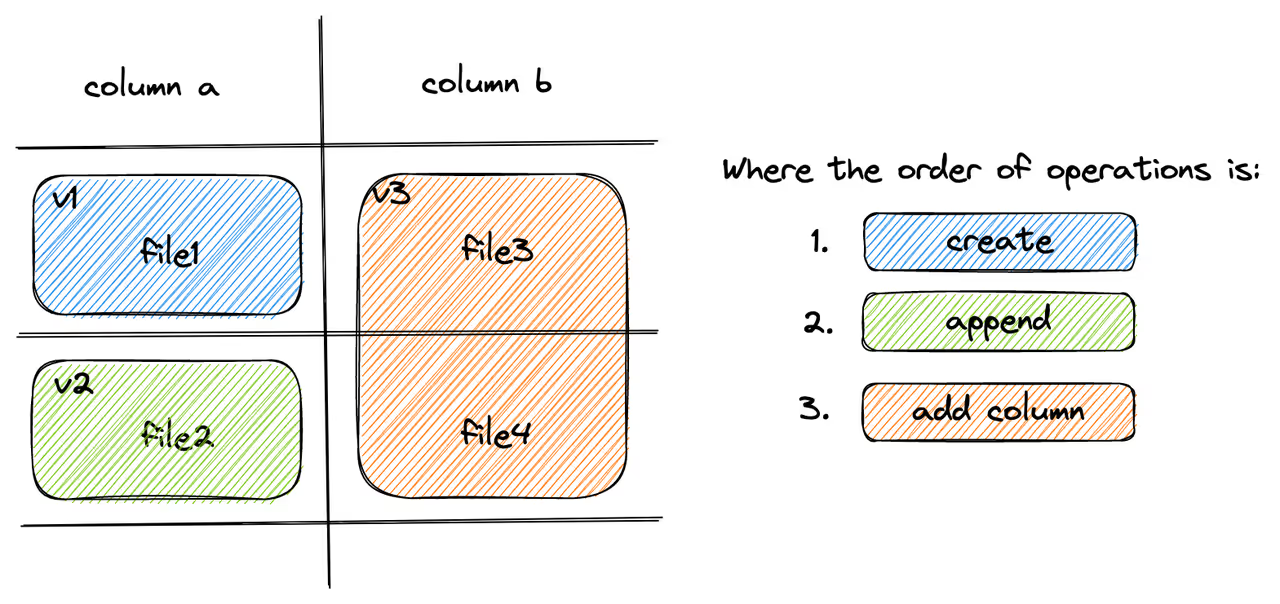

Zero-Cost Data Evolution

In intelligent driving scenarios, data annotation precision determines the upper limit of the model. Lance offers zero-cost data evolution that robustly supports dynamic annotation scenarios:

- Automatic traffic element annotation: Traffic lights, road signs, etc.

- Dynamic actor annotation: Pedestrian and vehicle trajectories.

- Environmental condition annotation: Lighting, precipitation, visibility.

When fine-tuning models using datasets corresponding to specific scenarios, it's necessary to filter datasets for particular scenarios based on certain labels. This requires label data, such as whether an image depicts cloudy weather or includes pedestrians.

The automatic labeling process for these tags essentially involves adding columns to the dataset.

Traditional methods (such as LMDB or Pickle) require rewriting the entire dataset when adding new columns, consuming significant resources. Lance, however, supports rapid schema evolution through Manifest metadata. It's very light and high-performance.

Client Results:

- 50% inference throughput gain (8×A100 GPU: 60% → 90%).

- 3× end-to-end efficiency (10PB label processing: 4 days → 1 day).

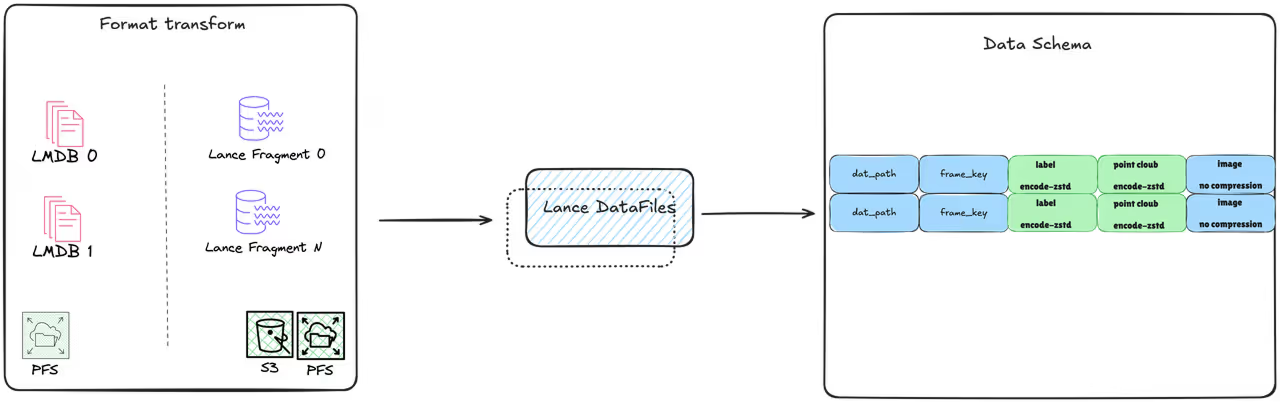

Transparent Compression

Lance uses ZSTD encoding for high compression ratios on point clouds/labels, reducing storage/bandwidth transparently.

Moreover, Lance's compression is defined in the schema, making it transparent and imperceptible during data writes or reads, thus significantly improving usability.

Point Query for AI Training

Lance optimizes training bottlenecks:

Conclusion

Lance achieves breakthroughs in auto driving data management, training efficiency, and cost optimization. With Zero-Cost Data Evolution, transparent compression, and point queries, clients gain 3× PB-scale data processing efficiency and >90% GPU utilization.

Join the Lance community to build next-gen AI data infrastructure!

- Documentation: www.lance.org

- Discord: discord.gg/lance